LLM Coding Failure Patterns

This is a running log of recurring failure patterns that I have encountered when using LLMs for coding work — across GitHub Copilot, Cursor, Claude Code, and others. These are not bugs but tendencies that appear across tools and models. Knowing them makes reviews faster. Each pattern appeared multiple times; some may fade with newer models.

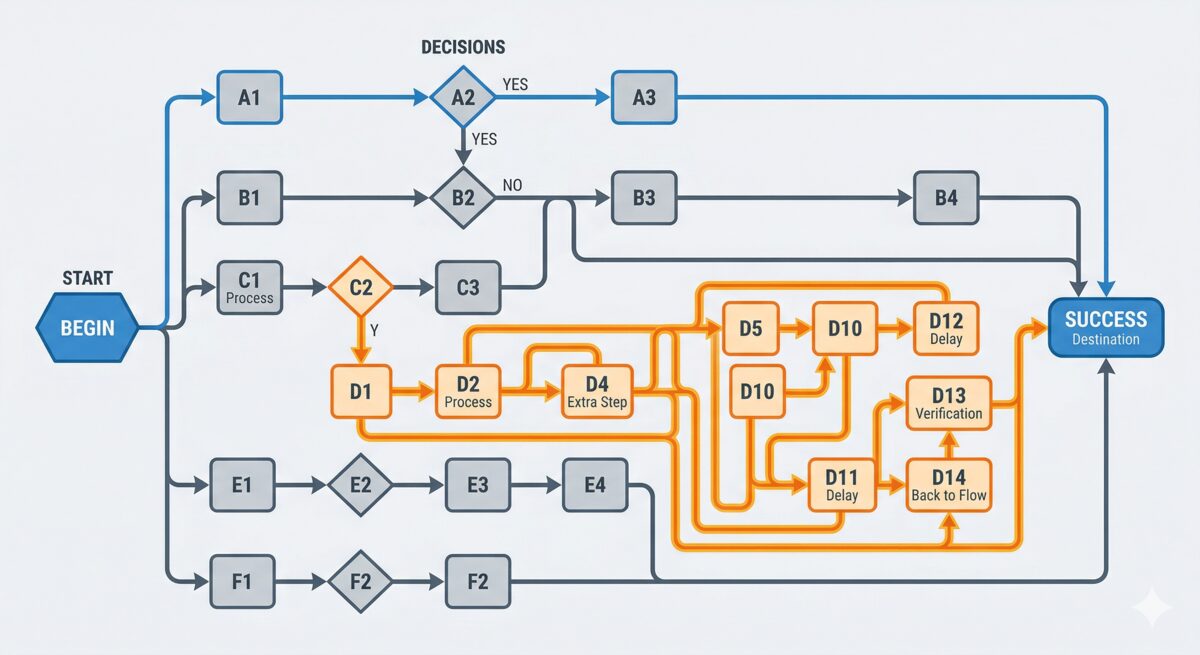

Over-engineering — solves the general case, not the specific one

Given a concrete task, an LLM often solves the abstract version of it. It does not reuse the helper function that already exists elsewhere in the codebase — it writes a new one. It adds parameters for flexibility nobody asked for. It handles edge cases that do not exist in the codebase.

The output is often correct but not appropriate. The feedback I give most often is to make as few changes as possible, keep it short, and reuse functions that are already there.

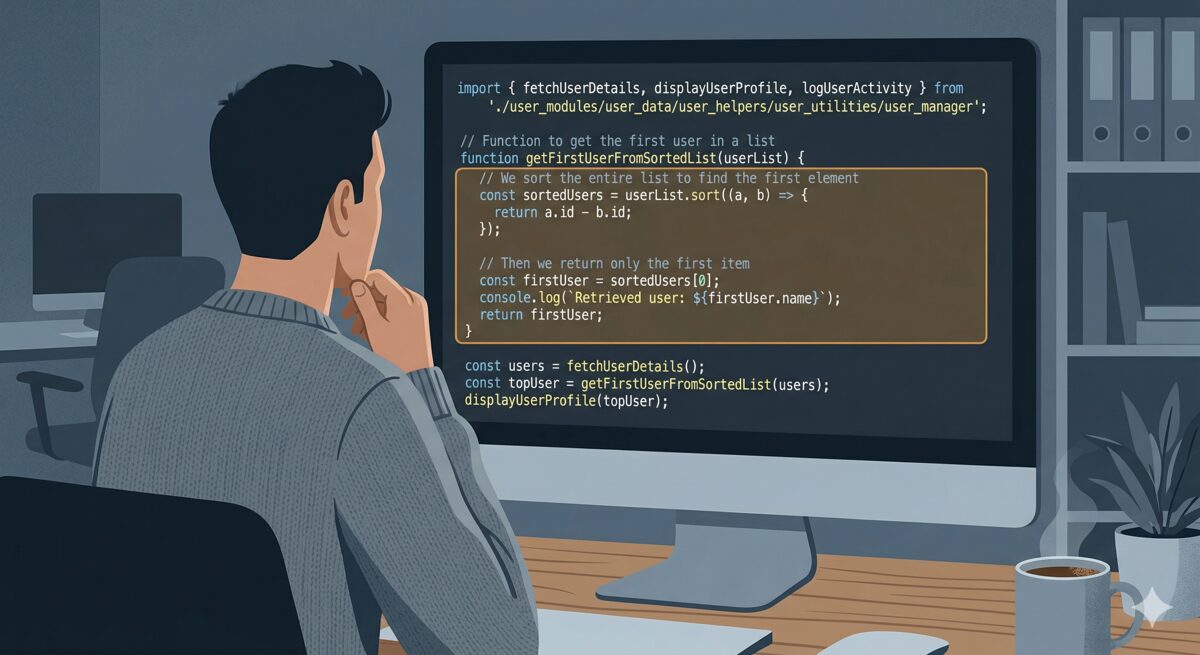

Clumsy solutions — right answer, wrong path

Sometimes the logic works but the approach is not very efficient. A concrete example: getting the maximum date

from a DataFrame by sorting the column and taking the last row, instead of calling .max().

It arrives at the right answer via the scenic route.

Token budget surprises — runs out mid-flow

Monthly caps hit faster than you expect on an active project. You start a session with enthusiasm and hit a wall mid-task — sometimes mid-edit, leaving the file in an inconsistent state.

It is not a model failure but a provider-imposed limitation — one worth keeping in mind. Starting big tasks early in a billing cycle and keeping local snapshots helps. Keeping track of remaining tokens and having fallback options ready also helps — a different model, the web interface, or a local model via Ollama.

Token leak / runaway output

An endless stream of <s> tokens from GitHub Copilot — a special token leaking into

the completion output, repeated until the session was killed. The only fix was to close and restart.

Rare, but a useful reminder that there is a probabilistic system under the hood. When a model starts producing obviously malformed output, stop and restart rather than trying to work around it.

Unit tests — confident but shallow

When I started using GitHub Copilot (2022), I mainly used it for code completion. But for actual code generation, unit tests were the main use case — and it is genuinely useful for that. The tests can be overly complex or too obvious though: they test a clean, trivial input that will always pass, rather than the edge cases that actually break things.

Guide it explicitly: ask for edge cases, null inputs, boundary values — the default tends toward the happy path. I also often ask it to add a description of what each test is actually verifying.

Infinite reviewer — always finds something new

Asking an LLM to review code or text, fixing what it finds, and then reviewing again will keep producing new feedback. The second round surfaces things the first missed. The third finds more. It does not seem to converge quickly — it keeps finding things.

I observed this with the GitHub Copilot PR reviewer (re-requesting a review after addressing comments) and with Claude when iterating on document drafts. Both kept finding issues across multiple rounds, none of which overlapped much with the previous ones.

Two practical consequences: first, it is expensive — tokens and time — if you loop this unchecked. Second, and more importantly, it can pull the output away from your original intent. Each iteration optimises for what the reviewer flags, not for your goal. The document or the code starts to reflect the model's preferences rather than yours.

The most important check is your own judgement: does the critique actually make sense? Not every flag is worth acting on. If you disagree with a suggestion, trust that — do not apply it just because the model flagged it. Beyond that: limit the number of review rounds upfront, and be explicit in the prompt about what is in scope and what is already settled.

Alex Goldhoorn is a freelance Senior Data Scientist. Find more at goldhoorn.net.